Anthropic vs the Pentagon

Forecasting the standoff

WarClaude

When pollster David Shor investigated the favorability of Anthropic late last year, he found that they had about the same name recognition as a fictional AI company, “Apex Logic.”

Well, now they’re trending towards becoming a household name, but it might not be for the reasons they were hoping.

After it came out that the Department of Defense had made use of Anthropic’s offerings during the raid on Maduro, there was understandably some internal blowback within Anthropic on potential misuse of Claude. Through a sequence of miscommunications between Anthropic and the Pentagon, this escalated into a dispute over whether the US military could compel Anthropic into allowing unfettered use of their AI tools.

To those tuned into Anthropic’s approach to constitutional alignment, this might seem like an impossible ask. It’s quite plausible that even without direct action on the part of Anthropic to deny US military uses, Claude could reject certain tasks due to its internal “moral compass.” The US government isn’t used to dealing with tools that can take their own initiative to reject certain uses. On top of this, Anthropic prides itself on careful and deliberate ethical restrictions, which clashes with potential DoD use cases such as… y’know… surveillance, guided weaponry, espionage, or fomenting coups.

In any case, after a couple of meetings between Anthropic CEO Dario Amodei and DoD Secretary Pete Hegseth, the situation has come to a head. The military has given Amodei and Anthropic until 5:01pm on Friday to remove certain restrictions (which may or may not be possible to do). They have threatened several possible actions:

Cutting ties with Anthropic and ending their contracts for the use of its products.

Declaring Anthropic a “supply chain risk” and mandating other military contractors to abandon their use of Anthropic’s offerings.

Invoking the Defense Production Act to compel Anthropic to produce a restriction-free version of Claude (which some have called “WarClaude”).

As Dean Ball, one of the authors of the administration’s AI action plan, has noted, these threats appear paradoxical. Either Anthropic is such a supply chain risk that no one should use it, or they’re so essential that they should be compelled to become an essential part of the military supply chain.

As a result, forecasting this situation is challenging. There aren’t clear delineations between the various actions that the Trump administration may take, and it’s possible that several actions may be taken, rescinded, or modified by the time the dust settles.

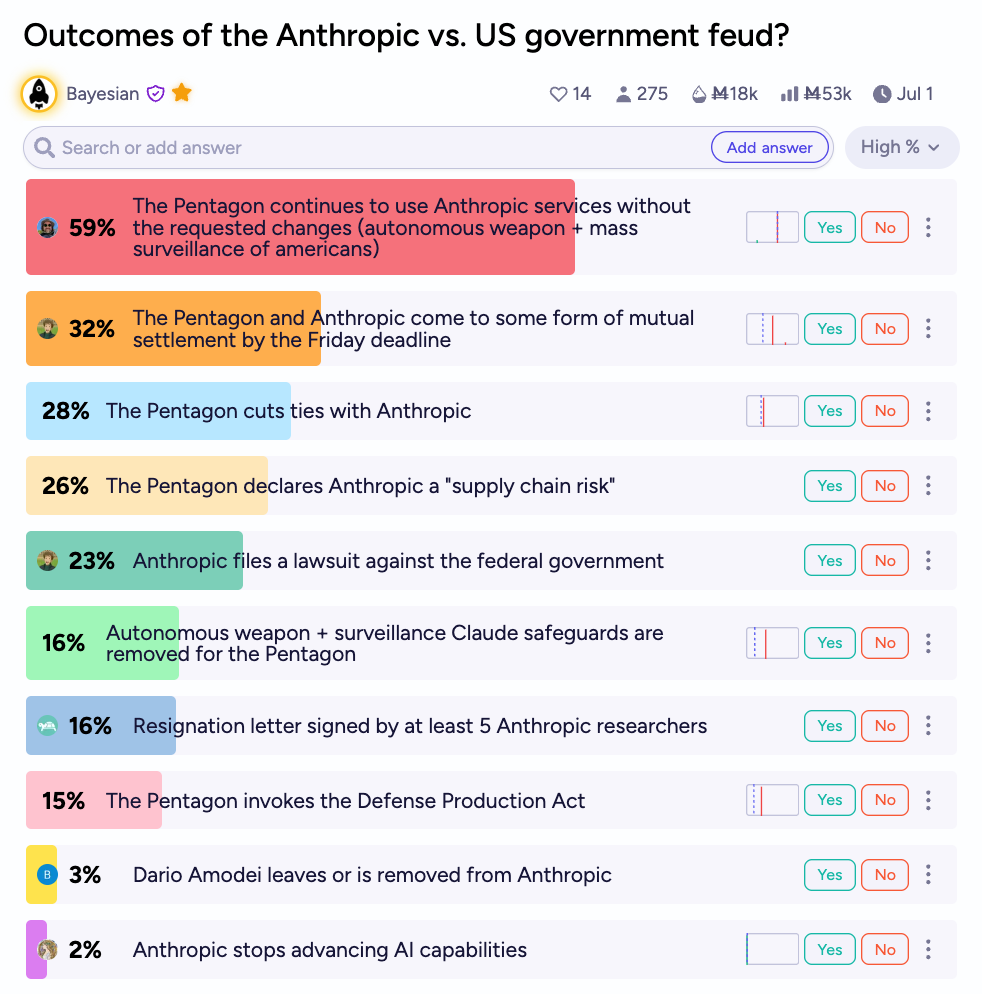

Manifold traders think the most likely outcome is something resembling “business-as-usual” after the clash: ~60% odds to Anthropic not making the requested changes. For what it’s worth, the Pentagon seems to have denied they have even made these explicit requests, contra previous reporting from Axios and others.

Despite this, traders only give about 1 in 3 odds that the Pentagon and Anthropic will reach a mutual settlement by the Friday deadline.

Traders think that some of the more escalatory outcomes are between 20 and 30% likely to occur: the Pentagon cutting ties with Anthropic, declaring them a supply chain risk, or Anthropic pursuing legal action. An invocation of the Defense Production Act, despite threats from the administration, is given only a 15% chance.

The market also is skeptical that Anthropic will actually remove any safeguards (if these safeguards are something that can be removed). Suspiciously, the probability for that action is quite similar to the probability that multiple Anthropic researchers resign over this affair.

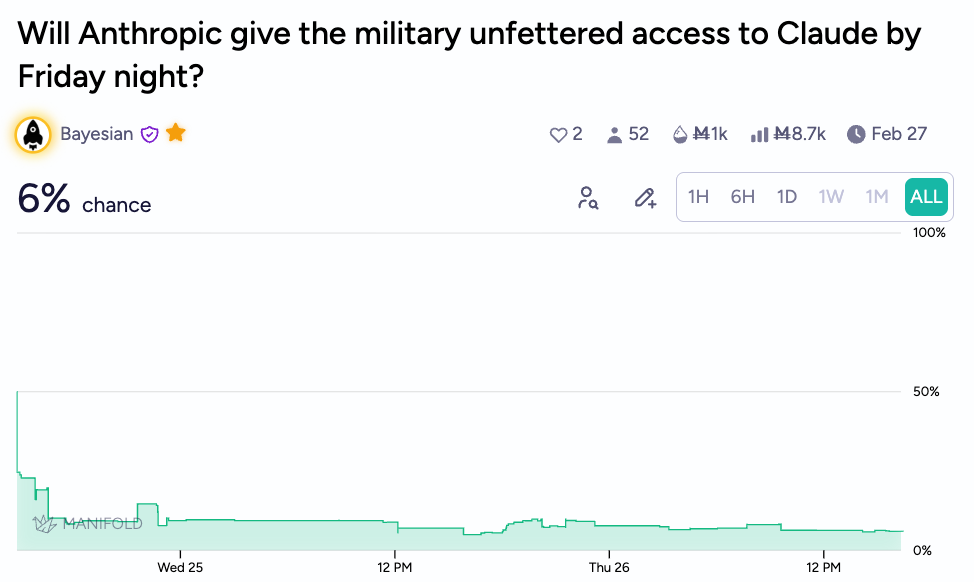

A separate market on specifically whether Anthropic will give the military “unfettered access” to Claude by their deadline is trading at just 6%:

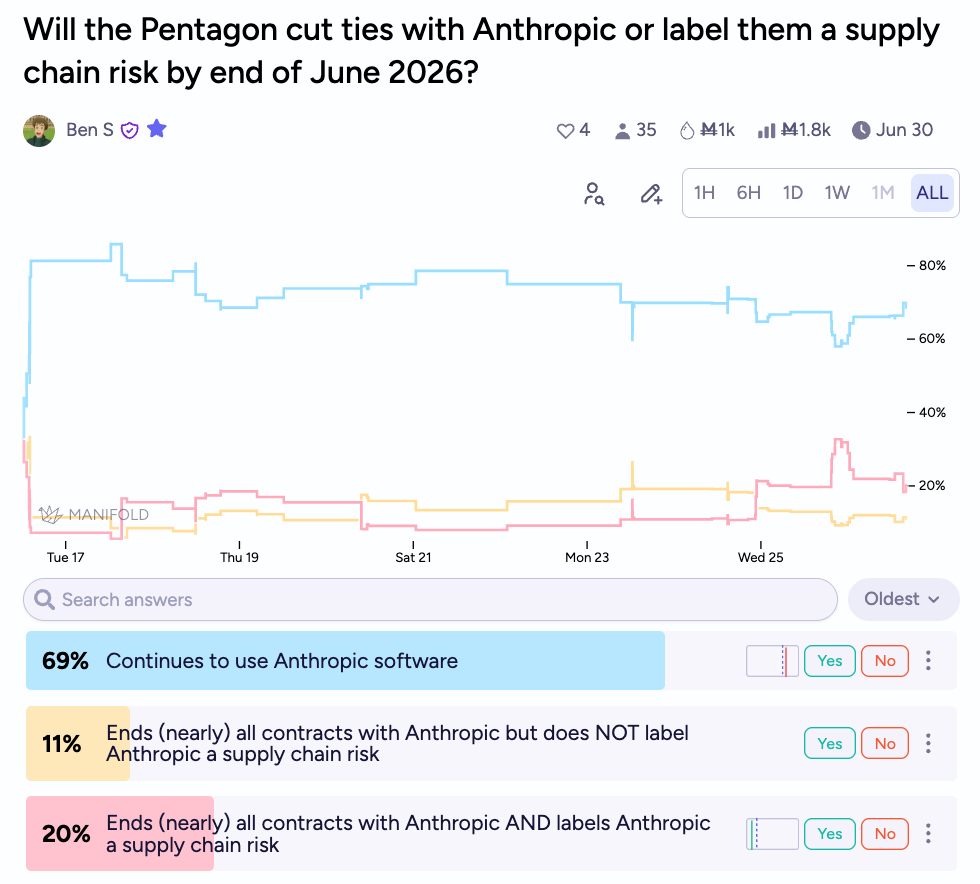

Another market forces traders to pick the closest of three options for the Pentagon: continuing to use Anthropic’s software, ending their contracts, or ending their contracts and additionally labeling Anthropic a supply chain risk.

Here we see about 70% odds that Anthropic continues to contract with the DoD. Traders also believe that, conditional on the DoD ending their contracts with Anthropic, it is likely that they also take the step of labeling them a supply chain risk, a label generally reserved for the companies of foreign adversaries!

Change Comes for Anthropic

It’s probably good to contextualize these events within the larger shifts happening at Anthropic. As the company positions itself as perhaps the leader in the AI race, with their current frontier model remaining slightly ahead of competitors by most expert assessments, they are also hoping to ensure a safe technological transition. Recent profiles of the company, including of their head of alignment, Amanda Askell, have focused on their dueling motivations. On one hand, Anthropic is racing to maximize earnings and secure sizable investments; on the other hand, they have clear goals set by their employees and executives (and indeed by their very structure as a public benefit corporation) to ensure beneficial societal impacts.

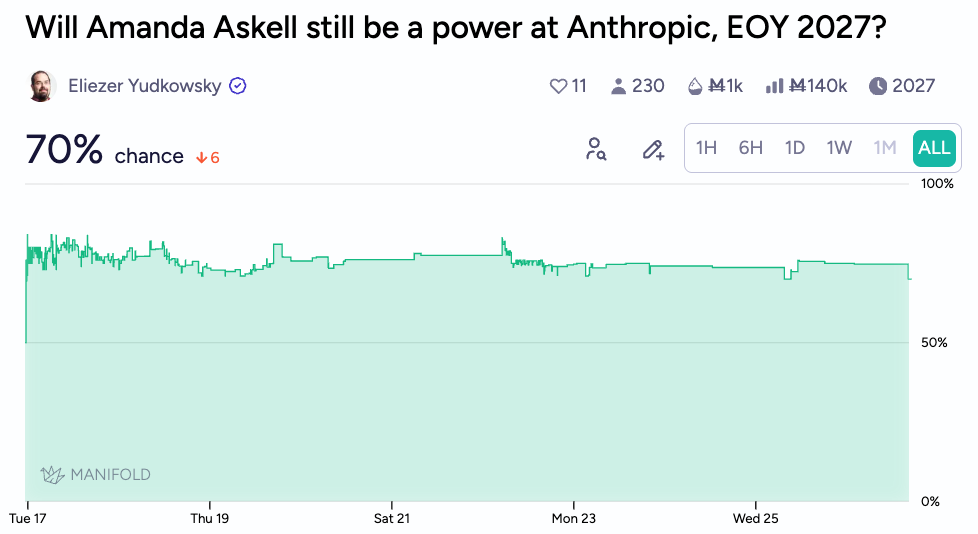

Manifold users are very interested in how this will affect the near future for Anthropic, as they grow in size to rival OpenAI. One proxy could be this market on whether their head of alignment, Amanda Askell, will remain in a position of leadership at the company through the end of next year.

Having goals that are perceived as orthogonal to the current US administration will put them into tension with the government, and it’s indeed possible that the publicity around Anthropic’s idiosyncratic ethical framework was what led to their current fight with Pentagon leadership.

Anthropic recently revised their “responsible scaling policy,” triggering a round of discourse on how companies should adjust their prior commitments when those commitments have become less practical or relevant. Some people have praised their transparency in amending the document, while others have called it out for no longer upholding tenets from prior versions.

Zvi Mowshowitz has recently created a market on whether Anthropic’s responsible scaling policy will be further revised over the next 3 years, with changes larger than its revisions in the current version. Traders give it 2 in 3 odds to occur.

Frontier Claude

Why is Anthropic attracting such attention from multiple sectors? Well, as even Pete Hegseth has reportedly said, they’re simply the best right now.

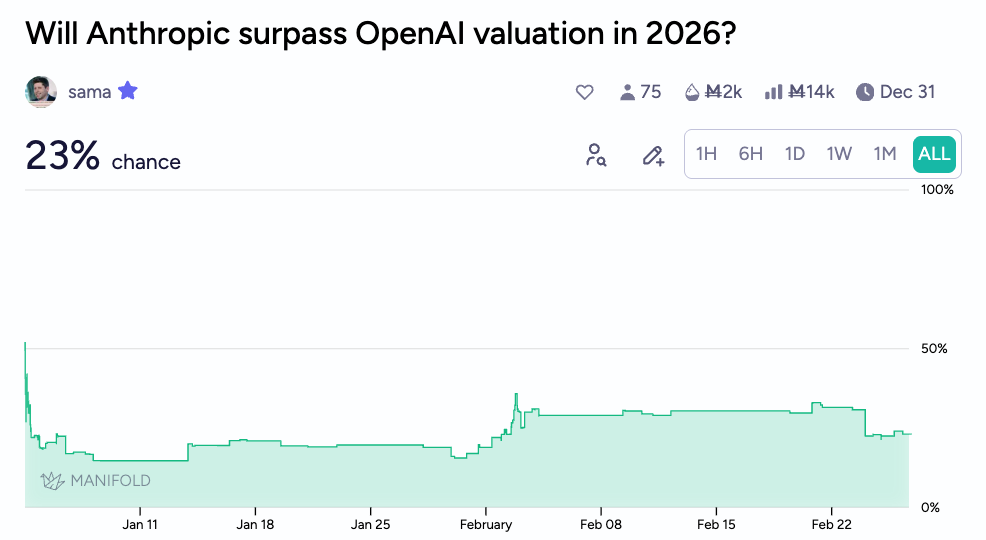

Despite having only ~1 in 4 chance to surpass OpenAI’s valuation this year (depending on how you look at it, that could actually be quite high!), Anthropic appears more narrowly focused than their competitors on developing highly intelligent, frontier models.

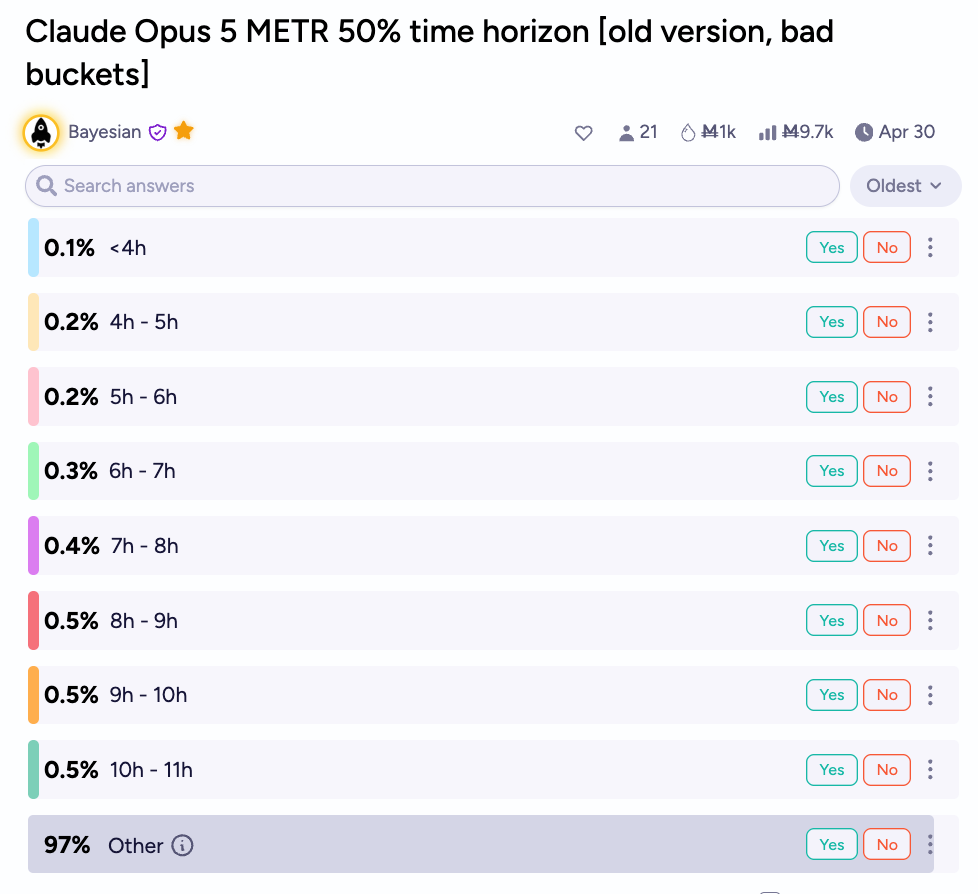

They recently released Claude Opus 4.6, which beat its benchmarks so hard that METR time horizon questions on successor models, whose buckets were set before its release, now look like this:

Opus 4.6’s METR time of over 14 hours blew away projections to the point that it might have been a trigger for the recently viral Citrini article on AGI economics (personal speculation here), and was certainly a trigger for endless exponential curve projections on Twitter.

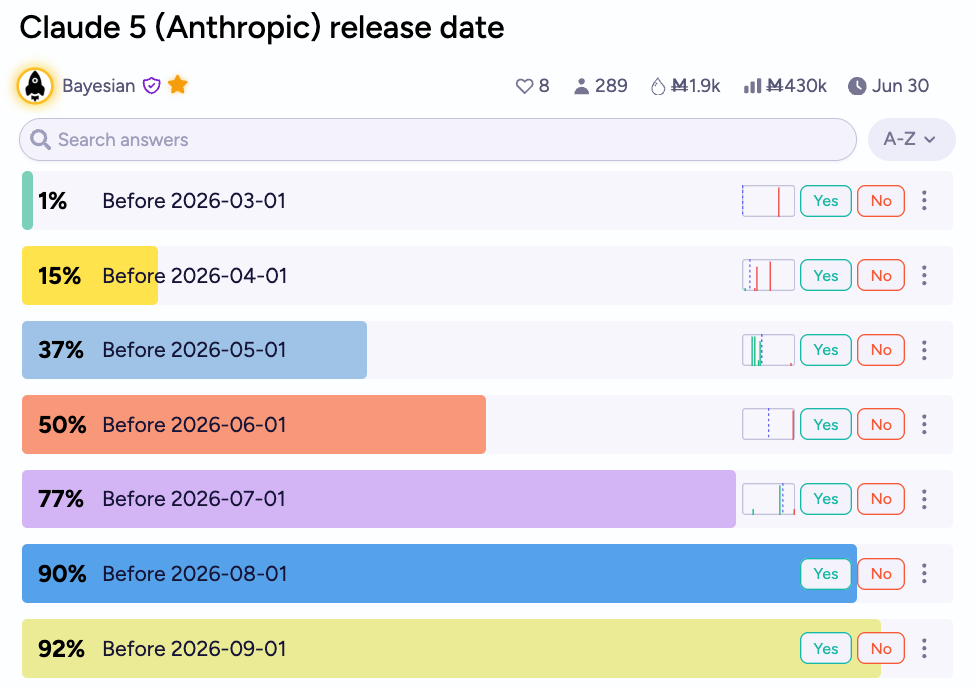

With top labs keeping up a blistering pace, traders expect Claude 5 (perhaps both Opus and Sonnet) to be released between April and July.

Happy Forecasting!

-Above the Fold

Thanks for the coverage. I’m curious. What’s the model by which publicity about Anthropic’s ethics would generate conflict with DoD, rather than the dispute simply reflecting substantive disagreements over permissible uses?